Heterogeneous computing on Android devices

Kai Wolf 19 August 2019

Today’s technical world spans a wide range of devices, from small smartwatches through smartphones, tablets and laptops up to large, powerful servers, and with them a wide variety of CPUs, GPUs and DSPs. These computing elements differ in their hardware capabilities. For instance, a CPU typically has far fewer cores than a GPU. Cache levels, cache sizes and instruction sets vary as well, and any modern GPU contains dedicated units for special tasks, such as shader units or texture mapping units (TMUs).

Writing highly efficient code that makes use of all these computing capabilities quickly becomes cumbersome, if it is possible at all. One common solution to this problem is to use so-called heterogeneous computing frameworks such as OpenCL, CUDA, OpenMP, MPI or, in the case of Android, RenderScript.

These frameworks provide an abstraction over the underlying hardware and are typically programmed in a dialect of the C programming language. With one of these frameworks, code can be written in a largely device- and architecture-independent manner. The framework then takes care of executing the code on multiple cores using either shared or non-shared memory; MPI can even run it on a distributed cluster of several servers. Hence, the choice of a heterogeneous computing framework depends heavily on the type of task and on the target platform, as there is no single solution that fits all.
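To make this programming model more concrete, here is a minimal sketch in plain Java. It is an analogy only and uses none of the frameworks named above: the developer writes a per-element kernel function, and a runtime decides how to spread the work across cores. The names `KernelSketch`, `invert` and `forEach` are hypothetical, and Java's parallel streams merely stand in for a framework's scheduler.

```java
import java.util.Arrays;
import java.util.function.IntUnaryOperator;
import java.util.stream.IntStream;

final class KernelSketch {
    // The "kernel": a pure function of a single input element.
    // Here it inverts an 8-bit channel value (hypothetical example).
    public static int invert(int value) {
        return 255 - value;
    }

    // Stand-in for a framework's launch call (an assumption, not a real API):
    // applies the kernel to every element. The parallel stream decides how
    // the index range is split across the available cores.
    public static int[] forEach(int[] input, IntUnaryOperator kernel) {
        int[] output = new int[input.length];
        IntStream.range(0, input.length)
                 .parallel()
                 .forEach(i -> output[i] = kernel.applyAsInt(input[i]));
        return output;
    }

    public static void main(String[] args) {
        int[] pixels = {0, 64, 128, 255};
        int[] result = forEach(pixels, KernelSketch::invert);
        System.out.println(Arrays.toString(result)); // prints [255, 191, 127, 0]
    }
}
```

Because each invocation writes only to its own output index, the calling code never has to deal with threads or synchronization. Frameworks of this kind offer the same property for the kernels they run.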

When it comes to Android, the variety of hardware is just as large. Google’s answer to this problem is called RenderScript, which was first introduced with Android 3.0 (Honeycomb). Today, RenderScript consists of a so-called compute API whose kernels are written in a C99-derived language and can target CPUs, GPUs and DSPs alike. RenderScript is designed to run on all Android devices, independent of the installed hardware. The actual portability depends on device-specific drivers, as only the vendor of a device knows exactly how to talk to the chips used in it. However, Android ships with a basic CPU-only driver for RenderScript to ensure baseline compatibility on all devices.
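The fallback idea can be pictured with a small plain-Java sketch. It does not use the RenderScript API, and the real driver lookup inside Android is certainly more involved. `DriverSelection`, `ComputeDriver`, `VendorDriver`, `CpuReferenceDriver` and `selectDriver` are hypothetical names chosen purely for illustration.

```java
import java.util.List;

final class DriverSelection {
    // Picks the first vendor driver that reports support for the device and
    // otherwise falls back to the CPU-only reference driver (hypothetical logic).
    public static ComputeDriver selectDriver(List<ComputeDriver> vendorDrivers) {
        return vendorDrivers.stream()
                .filter(ComputeDriver::supportsThisDevice)
                .findFirst()
                .orElseGet(CpuReferenceDriver::new);
    }

    public static void main(String[] args) {
        List<ComputeDriver> none = List.of();
        List<ComputeDriver> withGpu = List.of(new VendorDriver("vendor-gpu", true));
        System.out.println(selectDriver(none).name());    // prints cpu-reference
        System.out.println(selectDriver(withGpu).name()); // prints vendor-gpu
    }
}

// Hypothetical abstraction of a compute driver.
interface ComputeDriver {
    String name();
    boolean supportsThisDevice();
}

// Always available, mirroring the CPU-only driver that ships with the platform.
final class CpuReferenceDriver implements ComputeDriver {
    public String name() { return "cpu-reference"; }
    public boolean supportsThisDevice() { return true; }
}

// A vendor-supplied driver that may or may not match the installed chip.
final class VendorDriver implements ComputeDriver {
    private final String name;
    private final boolean available;

    VendorDriver(String name, boolean available) {
        this.name = name;
        this.available = available;
    }

    public String name() { return name; }
    public boolean supportsThisDevice() { return available; }
}
```

The point of the design is that the calling code stays the same whether or not a vendor driver exists. Only performance differs, not correctness.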

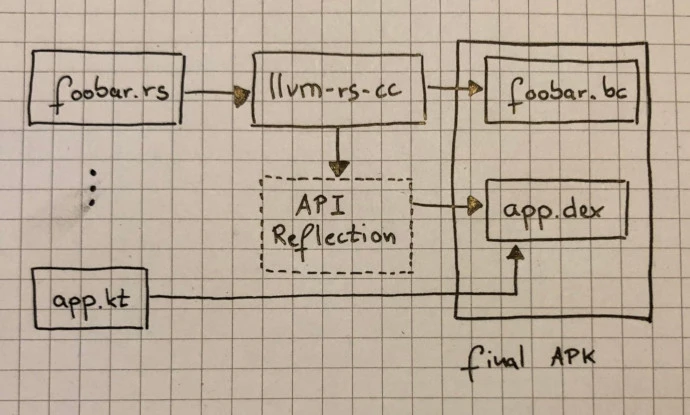

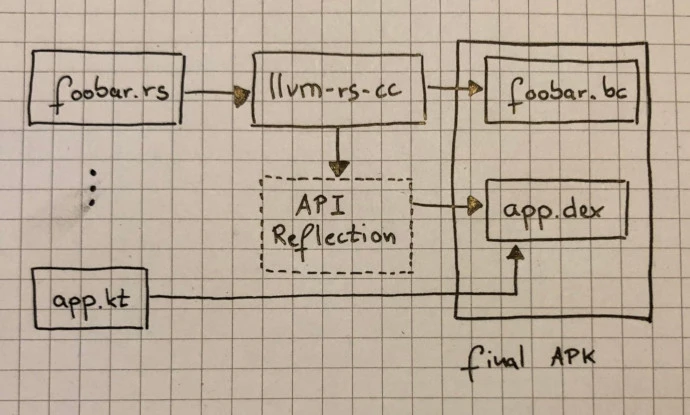

The build process for a RenderScript kernel happens in two phases. In the first phase, an LLVM-based compiler called slang (llvm-rs-cc), which comes pre-shipped with the Android NDK, consumes any script containing RenderScript kernels and emits highly optimized, portable code in IR format (IR stands for intermediate representation). This code is then pushed to the device.

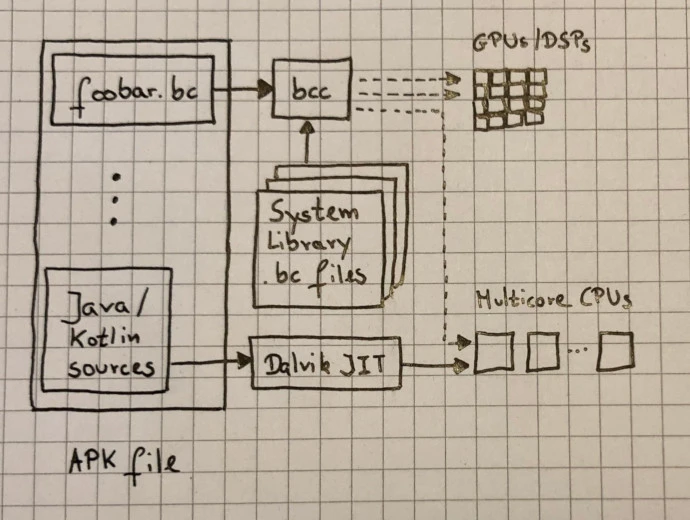

This pipeline has the advantage of performing aggressive, machine-independent optimizations on the developer’s host machine before the portable bitcode is pushed to the device, which saves both battery and CPU power on the device. Hence, the second phase of the build process can be somewhat more lightweight:

Here, the online JIT compiler (bcc) takes care of target-specific optimizations and code generation. The resulting code is either vectorized for a multicore CPU or, if the device vendor provides its own RenderScript-specific compiler, targeted at an installed GPU or DSP.

The reasoning behind this design was to provide a generic runtime library while allowing Android device manufacturers to provide their own specialized .bc library. The implementation makes heavy use of LLVM and provides a lightweight JIT that enables fast launch times as well as on-device linking.
